Application Stack

A legacy application stack hosted on VMs in the on-premises data centre can be moved to Amazon EC2 instances with a lift-and-shift migration.

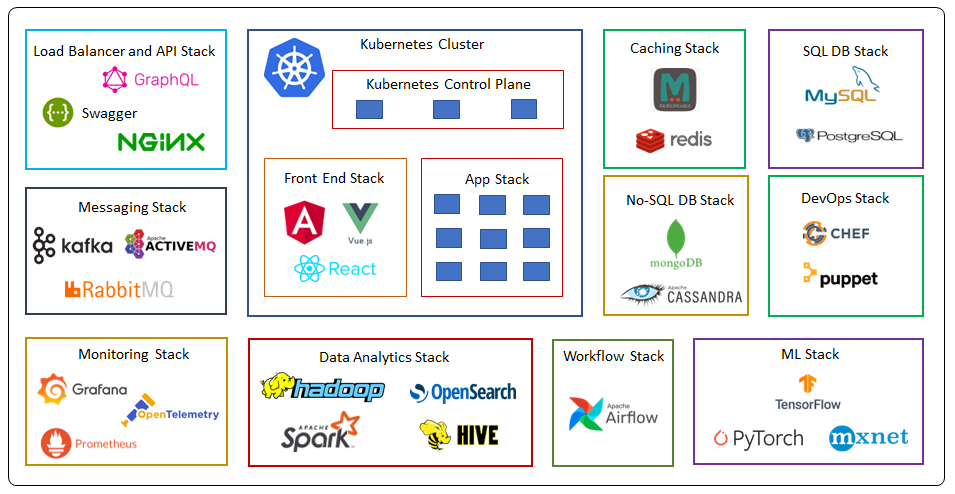

Kubernetes Cluster

Nowadays many organizations use Kubernetes to orchestrate their containerised microservices. Installing and managing the control plane nodes and the etcd database cluster with high availability and scalability is a challenging task. On top of that, we also need to manage the worker nodes on the data plane, where our applications run.

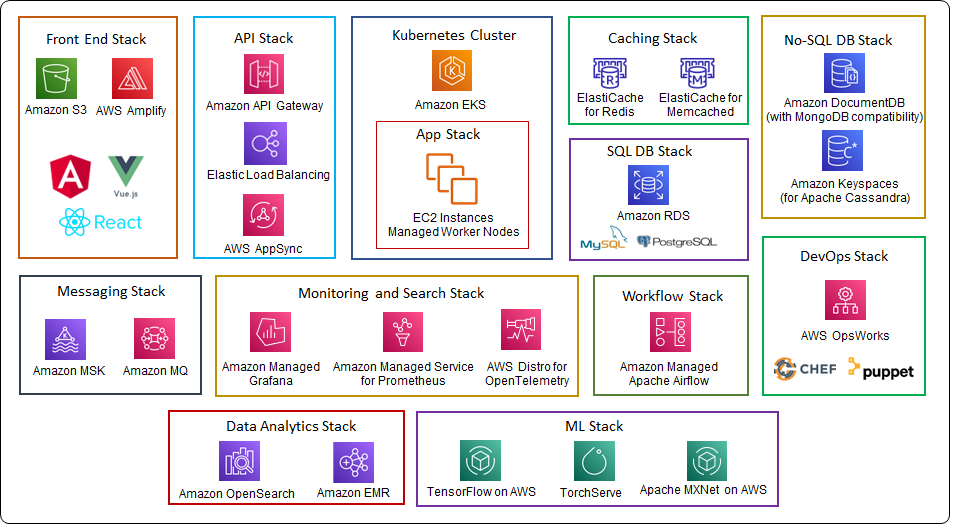

We can migrate our Kubernetes workloads to Amazon Elastic Kubernetes Service (EKS), which fully manages the control plane and its etcd cluster with high availability and reliability. Amazon EKS gives us three options for worker nodes: managed worker nodes, unmanaged (self-managed) worker nodes, and Fargate, a fully managed serverless option for running containers with Amazon EKS. Amazon EKS is certified against the CNCF Kubernetes software conformance standards, so we need not worry about portability issues. You can refer to my previous blog post on Planning and Managing Amazon VPC IP space in Amazon EKS cluster for best practices.

Amazon EKS Anywhere can also run Kubernetes clusters on-premises if you wish to keep some workloads there.

Front-End Stack

If our front-end stack is built with Angular, React, Vue or any other technology that produces a static web site, we can take advantage of the static website hosting capability of Amazon S3, a fully managed object storage service.

With AWS Amplify we can host both static and dynamic full-stack web apps and also implement CI/CD pipelines for new web or mobile projects.
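As a rough illustration of the S3 option, the sketch below builds the two documents a static site typically needs: a website configuration and a public-read bucket policy. The bucket name `my-frontend-bucket` and both helper names are hypothetical. The dictionaries follow the shapes that boto3's `put_bucket_website` and `put_bucket_policy` accept, but the code itself never calls AWS.

```python
import json


def website_configuration(index="index.html", error="index.html"):
    """Return an S3 static-website configuration.

    Pointing the error document at index.html is a common choice for
    single-page apps, so that client-side routes still load the app.
    """
    return {
        "IndexDocument": {"Suffix": index},
        "ErrorDocument": {"Key": error},
    }


def public_read_policy(bucket):
    """Return a bucket policy that lets anyone read objects in `bucket`."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "PublicReadGetObject",
                "Effect": "Allow",
                "Principal": "*",
                "Action": "s3:GetObject",
                "Resource": f"arn:aws:s3:::{bucket}/*",
            }
        ],
    }


if __name__ == "__main__":
    bucket = "my-frontend-bucket"  # hypothetical bucket name
    print(json.dumps(website_configuration(), indent=2))
    print(json.dumps(public_read_policy(bucket), indent=2))
    # With boto3 installed and credentials configured, these could be passed to
    # s3.put_bucket_website(Bucket=bucket, WebsiteConfiguration=...) and
    # s3.put_bucket_policy(Bucket=bucket, Policy=json.dumps(...)).
```

Keeping the configuration as plain data makes it easy to review before anything is applied to a real bucket.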

Load Balancer and API Stack

We can use Elastic Load Balancing to handle the traffic. An Application Load Balancer can do content-based routing to the microservices on the Amazon EKS cluster, and this is easily implemented with the ingress controller that AWS provides for Kubernetes (the AWS Load Balancer Controller).
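To make the content-based routing idea concrete, here is a minimal sketch that generates a Kubernetes Ingress manifest of the kind the AWS Load Balancer Controller watches for. The function name, the service names and the ports are made up for illustration; the `alb` ingress class and the two `alb.ingress.kubernetes.io` annotations are the controller's documented ones, but check them against the controller version you run.

```python
import json


def alb_ingress(name, routes, namespace="default"):
    """Build an Ingress that routes URL path prefixes to services.

    `routes` maps a path prefix to a (service_name, port) pair.
    """
    paths = [
        {
            "path": prefix,
            "pathType": "Prefix",
            "backend": {"service": {"name": svc, "port": {"number": port}}},
        }
        for prefix, (svc, port) in routes.items()
    ]
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "annotations": {
                # Ask for a public ALB that targets pod IPs directly.
                "alb.ingress.kubernetes.io/scheme": "internet-facing",
                "alb.ingress.kubernetes.io/target-type": "ip",
            },
        },
        "spec": {
            "ingressClassName": "alb",
            "rules": [{"http": {"paths": paths}}],
        },
    }


if __name__ == "__main__":
    # Hypothetical microservices behind one load balancer.
    manifest = alb_ingress(
        "shop",
        {"/orders": ("orders-svc", 8080), "/users": ("users-svc", 8080)},
    )
    print(json.dumps(manifest, indent=2))
```

Since `kubectl apply -f` accepts JSON as well as YAML, the printed manifest can be saved to a file and applied as it is.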

If we have a REST API stack described with the OpenAPI Specification (Swagger), we can import the OpenAPI definition files into Amazon API Gateway and implement the API stack there.

If we have a GraphQL API stack, we can implement it with the managed serverless service AWS AppSync, which supports back-end data stores on AWS such as DynamoDB.

If we run Nginx load balancers on-premises, we can move them to Amazon Lightsail instances.
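For a feel of what such an import file looks like, the sketch below builds a tiny OpenAPI 3.0 document with a single route that is proxied to an existing HTTP back end. The `/items` path, the function name and the back-end URL are hypothetical; `x-amazon-apigateway-integration` is the API Gateway extension that describes the integration, shown here in its simplest `http_proxy` form.

```python
import json


def minimal_openapi(title, backend_url):
    """Return a small OpenAPI 3.0 document with one proxied GET route."""
    return {
        "openapi": "3.0.1",
        "info": {"title": title, "version": "1.0.0"},
        "paths": {
            "/items": {
                "get": {
                    "responses": {"200": {"description": "List of items"}},
                    # API Gateway extension: forward the call to an HTTP back end.
                    "x-amazon-apigateway-integration": {
                        "type": "http_proxy",
                        "httpMethod": "GET",
                        "uri": f"{backend_url}/items",
                    },
                }
            }
        },
    }


if __name__ == "__main__":
    doc = minimal_openapi("Items API", "https://backend.example.com")
    print(json.dumps(doc, indent=2))
    # With boto3 installed, the serialized document could be handed to the
    # apigateway client's import_rest_api(body=...) call.
```

An existing Swagger file would normally be imported as it is; the extension block is only needed where API Gateway has to be told which back end serves a route.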

Messaging and Streaming Stack

It is important to decouple applications with a messaging layer. In a microservices architecture the messaging layer is generally implemented with popular open-source technologies such as Apache Kafka, Apache ActiveMQ and RabbitMQ. We can shift these workloads to managed services on AWS: Amazon Managed Streaming for Apache Kafka (Amazon MSK) and Amazon MQ.

Amazon MSK is a fully managed service for Apache Kafka that processes streaming data. It manages the control-plane operations for creating, updating and deleting clusters, and we use the data plane for producing and consuming the streaming data. Amazon MSK also creates the Apache ZooKeeper nodes; ZooKeeper is an open-source server that makes distributed coordination easy. MSK Serverless is also available in some regions, so that you need not worry about cluster capacity.

Amazon MQ is a managed message broker service that supports both Apache ActiveMQ and RabbitMQ. You can migrate existing message brokers that rely on compatibility with APIs such as JMS or protocols such as AMQP 0-9-1, AMQP 1.0, MQTT, OpenWire, and STOMP.
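The decoupling that a messaging layer provides can be sketched with nothing but the Python standard library. In the example below an in-process `queue.Queue` stands in for a Kafka topic or an MQ queue, and the function and message names are invented for illustration. It is not MSK or Amazon MQ client code; it only shows that the producer and the consumer know about the broker and not about each other.

```python
import queue
import threading


def run_pipeline(orders):
    """Send `orders` through a queue and return what the consumer produced."""
    broker = queue.Queue()  # stand-in for a Kafka topic or an MQ queue
    processed = []

    def consumer():
        while True:
            message = broker.get()
            if message is None:  # sentinel: the producer has finished
                break
            processed.append(f"shipped:{message}")

    worker = threading.Thread(target=consumer)
    worker.start()

    # The producer only talks to the broker, never to the consumer.
    for order in orders:
        broker.put(order)
    broker.put(None)

    worker.join()
    return processed


if __name__ == "__main__":
    print(run_pipeline(["order-1", "order-2"]))
```

With a real broker the two sides would run in separate services, so either one can be scaled, restarted or replaced without touching the other.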

Monitoring Stack

Well-known tools for monitoring IT resources and applications are OpenTelemetry, Prometheus and Grafana. In many cases OpenTelemetry agents collect metrics and distributed-trace data from containers and applications; the metrics are sent to a Prometheus server and can then be visualized and analysed with Grafana.

AWS Distro for OpenTelemetry consists of SDKs, auto-instrumentation agents, collectors and exporters that send data to back-end services. It follows an upstream-first model, which means AWS commits enhancements to the CNCF (Cloud Native Computing Foundation) project and then builds the Distro from upstream. AWS Distro for OpenTelemetry supports Java, .NET, JavaScript, Go, and Python. You can download AWS Distro for OpenTelemetry and deploy it as a DaemonSet in the Kubernetes cluster. It also supports sending metrics to Amazon CloudWatch.

Amazon Managed Service for Prometheus is a serverless, Prometheus-compatible monitoring service for container metrics, which means you can use the same open-source Prometheus data model and query language that you use on-premises to monitor your containers on Kubernetes. It integrates with AWS security services to secure your data. As it is a managed service, high availability and scalability are built in. Amazon Managed Service for Prometheus is charged on three dimensions: metrics ingested, metrics stored per GB per month, and query samples processed, so you only pay for what you use.

With Amazon Managed Grafana you can query and visualize metrics, logs and traces from multiple sources, including Prometheus. It integrates with data sources such as Amazon CloudWatch, Amazon OpenSearch Service, AWS X-Ray, AWS IoT SiteWise and Amazon Timestream, so that you have a central place for all your metrics and logs. You can control who has access to Grafana with IAM Identity Center. You can also upgrade to Grafana Enterprise, which gives you direct support from Grafana Labs.
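The three billing dimensions can be turned into a quick back-of-the-envelope estimate. The sketch below is only a model: the function name is made up, the billing units (per 10 million samples ingested, per GB-month stored, per billion query samples) are an assumption to verify against the current pricing page, and the rates in the example are placeholders rather than real prices.

```python
def monthly_cost(samples_ingested, storage_gb, query_samples,
                 ingest_rate_per_10m, storage_rate_per_gb, query_rate_per_1b):
    """Estimate a monthly bill from the three usage dimensions.

    All rates are supplied by the caller; nothing here is a real price.
    """
    ingest = samples_ingested / 10_000_000 * ingest_rate_per_10m
    storage = storage_gb * storage_rate_per_gb
    query = query_samples / 1_000_000_000 * query_rate_per_1b
    return round(ingest + storage + query, 2)


if __name__ == "__main__":
    # Placeholder usage and placeholder rates, for illustration only.
    estimate = monthly_cost(
        samples_ingested=100_000_000,
        storage_gb=50,
        query_samples=2_000_000_000,
        ingest_rate_per_10m=1.0,
        storage_rate_per_gb=0.5,
        query_rate_per_1b=0.25,
    )
    print(estimate)  # 10.0 + 25.0 + 0.5 = 35.5
```

Plugging in your own scrape volume shows quickly which of the three dimensions dominates the bill.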

SQL DB Stack

Most organizations have a SQL DB stack, and many prefer open-source databases such as MySQL and PostgreSQL. We can move these database workloads to Amazon Relational Database Service (Amazon RDS), which supports six database engines: MySQL, PostgreSQL, MariaDB, Microsoft SQL Server, Oracle and Aurora. Amazon RDS manages common database administration tasks such as backups and maintenance. It offers high availability through Multi-AZ, which is just a switch: once it is enabled, Amazon RDS automatically manages replication and failover to a standby database instance in another Availability Zone. With Amazon RDS we can also create read replicas for read-heavy workloads.

It makes sense to shift MySQL and PostgreSQL workloads to Amazon Aurora if our versions are among those Aurora supports, since the move then needs little or no change. Because it takes advantage of cloud-native clustered volume storage, Amazon Aurora offers up to five times the throughput of standard MySQL and up to three times the throughput of standard PostgreSQL. Aurora storage scales up to 128 TB.

If you have SQL Server workloads and opt for Aurora PostgreSQL, Babelfish lets Aurora accept connections from your SQL Server clients.

We can migrate our database workloads with AWS Database Migration Service and the AWS Schema Conversion Tool.

NoSQL DB Stack

If we have a NoSQL DB stack such as MongoDB or Cassandra in our on-premises data centre, then we can choose to run our workloads on Amazon DocumentDB (with MongoDB compatibility) and Amazon Keyspaces (for Apache Cassandra). Amazon DocumentDB (with MongoDB compatibility) is a fully managed database service whose storage volume grows as the stored data grows. The storage volume grows in increments of 10 GB, up to a maximum of 64 TB. We can also create up to 15 read replicas in other Availability Zones for high read throughput. You may also consider MongoDB Atlas on AWS, available from AWS Marketplace, which is developed and supported by MongoDB.

You can use the same Cassandra application code and developer tools on Amazon Keyspaces (for Apache Cassandra), which gives high availability and scalability. Amazon Keyspaces is serverless, so you pay only for the resources that you use, and the service automatically scales tables up and down in response to application traffic.
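The storage growth rule is simple arithmetic, and a small worked example makes it concrete. The sketch below is a simplified model based only on the two figures quoted above (10 GB steps, 64 TB ceiling). The function name, the 10 GB minimum and the choice of 1 TB = 1024 GB are assumptions made for the example, not documented service behaviour.

```python
import math

INCREMENT_GB = 10       # growth step quoted in the text
MAX_GB = 64 * 1024      # 64 TB, assuming 1 TB = 1024 GB


def allocated_storage_gb(data_gb):
    """Return the volume size after rounding up to the next 10 GB step."""
    if data_gb < 0:
        raise ValueError("data size cannot be negative")
    if data_gb > MAX_GB:
        raise ValueError("data size exceeds the 64 TB maximum")
    # Assumption: an empty cluster still occupies one increment.
    steps = max(1, math.ceil(data_gb / INCREMENT_GB))
    return steps * INCREMENT_GB


if __name__ == "__main__":
    for size in (0, 10, 23, 1000.5):
        print(size, "GB of data ->", allocated_storage_gb(size), "GB volume")
```

For example, 23 GB of data lands on a 30 GB volume, because the third 10 GB step is the first one large enough to hold it.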

Caching Stack

We use an in-memory data store as a cache for applications that need very low latency. Amazon ElastiCache supports the Memcached and Redis cache engines. We can go for Memcached if we need a simple model and multithreaded performance. We can choose Redis if we require high availability with replication to another Availability Zone, along with advanced features such as pub/sub and complex data types. With Redis you can choose to run with cluster mode enabled or disabled. ElastiCache for Redis manages backups, software patching, automatic failure detection, and recovery.
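A common way to use such a cache is the cache-aside pattern: read from the cache first, fall back to the database on a miss, then store the result for next time. The sketch below shows that flow with a small in-memory class standing in for Redis or Memcached. The class, the function names and the `load_from_db` callback are hypothetical, and no ElastiCache client is involved.

```python
import time


class TTLCache:
    """Tiny in-memory cache with per-key expiry (stand-in for Redis/Memcached)."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self.clock() >= expires_at:
            del self._store[key]  # expired: treat as a miss
            return None
        return value

    def set(self, key, value):
        self._store[key] = (value, self.clock() + self.ttl)


def get_product(product_id, cache, load_from_db):
    """Cache-aside read: try the cache, fall back to the database, then populate."""
    cached = cache.get(product_id)
    if cached is not None:
        return cached
    value = load_from_db(product_id)
    cache.set(product_id, value)
    return value


if __name__ == "__main__":
    cache = TTLCache(ttl_seconds=60)
    slow_lookup = lambda pid: {"id": pid, "name": "demo"}  # pretend database call
    print(get_product(42, cache, slow_lookup))  # miss: goes to the "database"
    print(get_product(42, cache, slow_lookup))  # hit: served from the cache
```

The expiry time bounds how stale a cached value can get, which is the main knob to tune when the underlying data changes.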

DevOps Stack

For DevOps and configuration management we may be using Chef Automate or Puppet Enterprise. When we shift to the cloud, we can use the same stack to configure and deploy our applications with AWS OpsWorks, a configuration management service that creates fully managed Puppet Enterprise and Chef Automate servers.

With OpsWorks for Puppet Enterprise we can configure, deploy and manage EC2 instances as well as on-premises servers. It gives full-stack automation for OS configuration, package installation and updates, and change management.

You can run your cookbooks, which contain recipes, on OpsWorks for Chef Automate to manage infrastructure and applications on EC2 instances.

Data Analytics Stack

OpenSearch is an open-source search and analytics suite forked from the Apache License 2.0 (ALv2) versions of Elasticsearch and Kibana, which were the last open-source versions of both. If you have workloads built on the open-source version of Elasticsearch or on OpenSearch, you can migrate them to Amazon OpenSearch Service, which is a managed service. AWS has added several new features for OpenSearch, such as support for cross-cluster replication, trace analytics, data streams, transforms, a new observability user interface, and notebooks in OpenSearch Dashboards.

Big data frameworks such as Apache Hadoop and Apache Spark can be ported to Amazon EMR. We can process petabyte-scale data from multiple data stores such as Amazon S3 and Amazon DynamoDB using open-source frameworks such as Apache Spark, Apache Hive, and Presto. An Amazon EMR cluster is a collection of managed EC2 instances on which EMR installs different software components, and each EC2 instance becomes a node in the Apache Hadoop framework. Amazon EMR Serverless is a newer option that makes it easy and cost-effective for data engineers and analysts to run applications without managing clusters.

Workflow Stack

For workflow orchestration, we can port Apache Airflow workloads to Amazon Managed Workflows for Apache Airflow (Amazon MWAA), a managed service for Apache Airflow. As usual in the cloud, we gain scalability and high availability without the headache of maintenance.

ML Stack

Apart from the AWS-native ML tools in Amazon SageMaker, AWS supports many open-source tools such as MXNet, TensorFlow and PyTorch through managed services and Deep Learning AMIs.