The Response from Generative AI depends on Our Intelligence more than the Intelligence within It

In-Short

CaveatWisdom

Caveat:

It is easy to type a question and get a response from Generative AI. What matters is getting the right answer for the context, because the Large Language Models (LLMs) behind Generative AI are designed only to predict the next word, and they can hallucinate if they don’t get the context right or if they don’t have the required information within them.

Below is a screenshot of the above example and the response from a Gen AI model in Amazon Bedrock.

Wisdom:

- Don’t be 100% sure that whatever Generative AI says is true. It can often be false, and you need to apply your critical thinking.

- It is important to understand the limitations of Generative AI and frame our prompts to get the right answers.

- Be specific with what you need and provide detailed and precise prompts.

- Give clear instructions instead of jargon and overly complex phrases.

- Ask open-ended questions instead of questions that can be answered with yes or no.

- Give proper context, including the purpose of your request.

- Break down complicated tasks into simple tasks.

- Choose the right model as per the task.

- Consider the cost factor for different models to perform different tasks. Sometimes traditional AI is much less costly than Generative AI.

In-Detail

In this post I will use different LLMs available in the Amazon Bedrock service to demonstrate where the models can go wrong, and show you how to write prompts that get meaningful answers from the appropriate model.

Amazon Bedrock

Amazon Bedrock is a fully managed, serverless, pay-as-you-go service that offers multiple Generative AI foundation models through a single API.

In this repo I have discussed how to develop an Angular web app, for any scenario, that accesses Amazon Bedrock from the backend. In this post I will be using the Bedrock Playground in the AWS Console.

Understanding Basics

Tokens – These are the basic units of text or code that LLMs use to process and generate language. They can be individual characters, parts of words, whole words, or parts of sentences. Each token is assigned a number, and these numbers are put into a vector that becomes the actual input to the first layer of the LLM’s neural network.

Some rules of thumb for tokens are:

- 1 token ~= 4 chars in English

- 1 token ~= ¾ of a word

- 100 tokens ~= 75 words

Or

- 1-2 sentences ~= 30 tokens

- 1 paragraph ~= 100 tokens

- 1,500 words ~= 2048 tokens
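The rules of thumb above can be turned into a quick estimator. This is only a rough sketch: the function names are made up for illustration, and real tokenizers differ from model to model, so the numbers are approximations and not what any Bedrock model will actually count.

```python
import math


def estimate_tokens_from_chars(text: str) -> int:
    """Rule of thumb: about 4 characters per token in English."""
    return math.ceil(len(text) / 4)


def estimate_tokens_from_words(text: str) -> int:
    """Rule of thumb: 1 token is about 3/4 of a word, so 4/3 tokens per word."""
    return math.ceil(len(text.split()) * 4 / 3)


sample = "It is easy to type a question and get a response from Generative AI."
print(estimate_tokens_from_chars(sample), estimate_tokens_from_words(sample))
```

The two estimates usually disagree a little, which is expected. They are meant for budgeting prompt size and cost, not for exact limits.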

Some common configuration options you will find in the Amazon Bedrock playground across models are Temperature, Top P and Maximum Length.

Temperature – Increasing the temperature makes the model more creative. Decreasing it makes the model more stable, and you get more repetitive completions. Setting the temperature to zero disables random sampling and gives deterministic results.

Top P – This is the cumulative probability cutoff from which tokens are sampled. If the value is less than 1.0, only the most likely tokens whose combined probability reaches that value are considered, which results in more stable and repetitive output.

Maximum Length – You can control the number of tokens generated by the model by setting a maximum number of tokens.

I have tried the above parameters with different variations in different models, and I am posting only the important examples in this post.
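To make Temperature and Top P more concrete, here is a minimal sketch of how a sampler could apply them to the raw scores (logits) of candidate tokens. It is a generic textbook-style illustration with made-up function names and toy scores. It is not how any particular model in Bedrock is implemented, and providers may differ in the details.

```python
import math
import random


def apply_temperature(logits, temperature):
    """Convert raw scores to probabilities; a lower temperature sharpens them."""
    if temperature <= 0:
        # Treat zero as greedy decoding: all probability on the top score.
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs, top_p):
    """Keep the most likely tokens until their combined probability reaches top_p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    total = sum(probs[i] for i in kept)
    return {i: probs[i] / total for i in kept}  # renormalised probabilities


def sample_next_token(logits, temperature=1.0, top_p=1.0, rng=random):
    """Pick one token index using temperature scaling followed by top-p filtering."""
    candidates = top_p_filter(apply_temperature(logits, temperature), top_p)
    tokens = list(candidates)
    weights = [candidates[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


toy_logits = [1.0, 3.0, 2.0]  # hypothetical scores for three candidate tokens
print(sample_next_token(toy_logits, temperature=0))  # always the top-scoring index
print(sample_next_token(toy_logits, temperature=1.0, top_p=0.9))
```

Maximum Length is simply a cap on how many times a loop like this is allowed to run before generation stops.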

The art of writing prompts to get the right answers from Generative AI is called Prompt Engineering.

Some of the Prompting Techniques are as follows:

Zero-Shot Prompting

LLMs are trained to follow the instructions we give them. So we can start the prompt with instructions on what to do with the given information, without providing any examples.

In the below example we give the instruction at the start, asking the model to classify the text for sentiment analysis.
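A zero-shot prompt of this shape can be assembled as in the sketch below. The function name, the instruction wording and the sample text are illustrative assumptions, not the exact prompt used in the screenshot.

```python
def zero_shot_prompt(instruction: str, text: str) -> str:
    """Put the instruction first, then the text to act on. No examples are included."""
    return f"{instruction}\n\nText: {text}\nSentiment:"


prompt = zero_shot_prompt(
    "Classify the text into neutral, negative or positive.",  # hypothetical instruction
    "I think the vacation was okay.",                          # hypothetical input text
)
print(prompt)
```

Ending the prompt with `Sentiment:` nudges the model to complete the line with just the label.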

Few-Shot Prompting

Here we enable in-context learning: we provide examples in the prompt, and they condition the model for the subsequent input for which we would like it to generate a response.

In the below example the Titan Text G1 model is unable to predict the right sentiment with few-shot prompts. It says the model is unable to predict negative opinion!

The same prompt works with another model, AI21 Labs’ Jurassic-2 Mid. So you need to test and be wise when choosing the right model for your task.
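The structure of a few-shot prompt can be sketched as follows. The example sentences, the `//` separator and the function name are assumptions chosen for illustration; the screenshots may use different wording.

```python
from typing import List, Tuple


def few_shot_prompt(examples: List[Tuple[str, str]], query: str) -> str:
    """List labelled examples first, then the new text with its label left blank."""
    lines = [f"{text} // {label}" for text, label in examples]
    lines.append(f"{query} //")  # the model is expected to fill in this label
    return "\n".join(lines)


examples = [  # hypothetical labelled examples
    ("This is awesome!", "Positive"),
    ("This is bad!", "Negative"),
    ("Wow, that movie was rad!", "Positive"),
]
prompt = few_shot_prompt(examples, "What a horrible show!")
print(prompt)
```

Sending the same string to more than one model is a simple way to repeat the comparison described above.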

Few-Shot Prompting Limitation

When dealing with complex reasoning tasks, Few-Shot Prompting is not a perfect technique.

You can see below, even after giving examples, the model fails to give the right answer.

In the last group of numbers (15, 32, 5, 13, 82, 7, 1), which is the question, the odd numbers are 15, 5, 13, 7 and 1, and their sum (15+5+13+7+1) is 41, which is an odd number. But the model (Jurassic-2 Mid) says, “The answer is True” and agrees that it is an even number.

The above failure leads to our next technique, Chain-of-Thought.
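The arithmetic is easy to verify with a few lines of Python, which also gives a ground truth for checking any model's answer to this kind of question:

```python
numbers = [15, 32, 5, 13, 82, 7, 1]

odd_numbers = [n for n in numbers if n % 2 == 1]  # keep only the odd values
total = sum(odd_numbers)
is_even = total % 2 == 0

print(odd_numbers, total, is_even)
```

The sum is odd, so the correct answer to "the odd numbers add up to an even number" is False.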

Chain-Of-Thought Prompting

In the Chain-of-Thought (CoT) technique we show the model, with examples, how to solve a problem step by step.

I am repeating the same example from the Few-Shot Prompting section above, with a variation: the examples now explain to the model how to pick out the odd numbers and check whether their sum is even or not, and only after that state the answer, True or False.

In the below screenshots, you can see that the models Jurassic-2 Mid, Titan Text G1 – Express and Jurassic-2 Ultra don’t do well even after being given the example with Chain-of-Thought. This shows their limitations. At the same time, we can see that Claude v2 does an excellent job of reasoning and arriving at the answer with the step-by-step process, that is, Chain-of-Thought.
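A Chain-of-Thought prompt for this task could be built as in the sketch below. The claim wording, the two example groups and the function names are assumptions for illustration. The worked reasoning is computed by the code, so the examples given to the model are guaranteed to be arithmetically correct.

```python
from typing import List

# Hypothetical wording of the claim the model has to judge.
CLAIM = "The odd numbers in this group add up to an even number: {numbers}."


def worked_answer(numbers: List[int]) -> str:
    """Spell out the reasoning step by step, then state True or False."""
    odds = [n for n in numbers if n % 2 == 1]
    if not odds:
        return "There are no odd numbers, so the sum is 0, which is even. The answer is True."
    total = sum(odds)
    parity = "even" if total % 2 == 0 else "odd"
    verdict = "True" if total % 2 == 0 else "False"
    return (
        f"The odd numbers are {', '.join(map(str, odds))}. "
        f"Their sum is {' + '.join(map(str, odds))} = {total}, which is {parity}. "
        f"The answer is {verdict}."
    )


def cot_prompt(examples: List[List[int]], question: List[int]) -> str:
    """Worked examples first, then the real question with the answer left open."""
    parts = []
    for numbers in examples:
        parts.append("Q: " + CLAIM.format(numbers=", ".join(map(str, numbers))))
        parts.append("A: " + worked_answer(numbers))
    parts.append("Q: " + CLAIM.format(numbers=", ".join(map(str, question))))
    parts.append("A:")
    return "\n".join(parts)


prompt = cot_prompt(
    examples=[[4, 8, 9, 15, 12, 2, 1], [17, 10, 19, 4, 8, 12, 24]],  # hypothetical examples
    question=[15, 32, 5, 13, 82, 7, 1],
)
print(prompt)
```

The same `worked_answer` function can be used afterwards to check whether a model's reply reaches the right verdict.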

Zero-Shot Chain-Of-Thought Prompting

In this technique we directly instruct the model to think step by step before giving the answer in complex reasoning tasks.

One of the nice features of Amazon Bedrock is that we can compare the models side by side and decide on the appropriate one for our use case.

In the below example I compared Jurassic-2 Ultra, Titan Text G1 – Express and Claude v2. We can see that Claude v2 does an excellent job, but its cost is also on the higher side.

So, it’s again our intelligence that decides which model to use for the task at hand, considering the cost factor.
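In its simplest form this means appending a step-by-step instruction to the question, with no worked examples. The sketch below uses the commonly quoted phrase "Let's think step by step"; the exact wording and the function name are assumptions, not the prompt from the screenshots.

```python
def zero_shot_cot_prompt(question: str) -> str:
    """Ask the question and cue the model to reason before answering."""
    return f"Q: {question}\nA: Let's think step by step."


prompt = zero_shot_cot_prompt(
    "The odd numbers in this group add up to an even number: "
    "15, 32, 5, 13, 82, 7, 1. True or False?"
)
print(prompt)
```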

Prompt Chaining

Breaking down a complex task into smaller tasks, and using the output of one small task as the input to the next, is called Prompt Chaining.
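The control flow of a prompt chain is small enough to sketch in a few lines. Here `model` is any function that takes a prompt and returns text. The `stub_model` below is a stand-in so the example runs without AWS access, and the templates are hypothetical. In practice `model` would wrap a call to a Bedrock model.

```python
from typing import Callable, List


def run_chain(templates: List[str], model: Callable[[str], str], initial_input: str) -> List[str]:
    """Run each step in order, feeding the previous response into the next prompt."""
    outputs = []
    current = initial_input
    for template in templates:
        prompt = template.format(input=current)  # each template needs an {input} placeholder
        current = model(prompt)
        outputs.append(current)
    return outputs


def stub_model(prompt: str) -> str:
    """Stand-in for a real LLM call: it just echoes the prompt in brackets."""
    return f"[{prompt}]"


steps = [  # hypothetical two-step chain
    "Extract the key facts from this text: {input}",
    "Write a one-line summary of these facts: {input}",
]
for response in run_chain(steps, stub_model, "some long document"):
    print(response)
```

Keeping every intermediate response, as `run_chain` does, makes it easier to see which step went wrong when the final answer is poor.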

Tree-Of-Thoughts

This technique extends prompt chaining by asking the Gen AI to act as different personas or subject matter experts (SMEs) and then chaining the response from one persona as input to another persona.

Below are the screenshots of an example in which I have given the model a document describing a complex cloud migration project of a global renewable energy company.

In the first step I asked the model to act as a Business Analyst and give the Functional and Non-Functional requirements of the project.

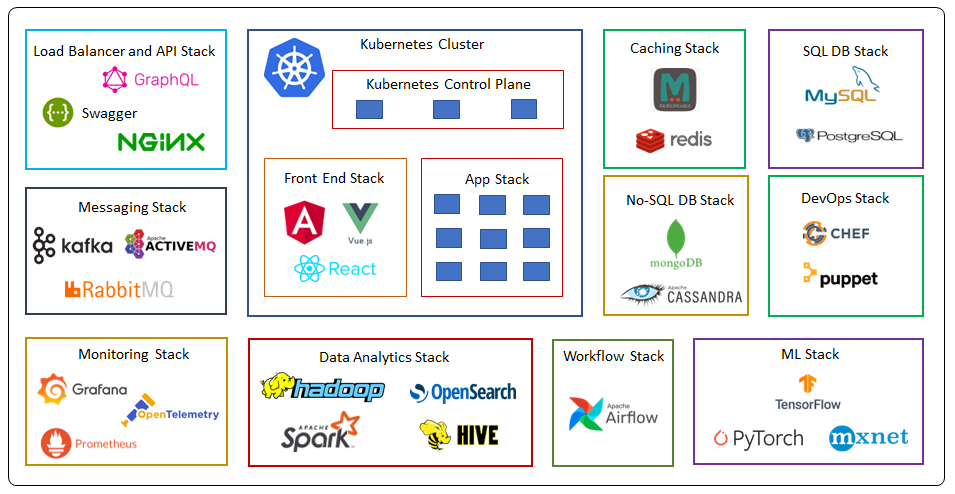

Next, the response from the first step is given as input, and the model is asked to act as a Cloud Architect and give the architecture considerations for those functional and non-functional requirements.
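The two persona steps can be sketched as a small loop. The prompt wording, the function names and the `stub_model` stand-in are assumptions for illustration. In the post itself the steps were run by hand in the Bedrock Playground.

```python
from typing import Callable, List, Tuple


def persona_prompt(role: str, task: str, material: str) -> str:
    """Ask the model to take on a persona and work on the given material."""
    return f"Act as a {role}.\n{task}\n\nInput:\n{material}"


def run_personas(steps: List[Tuple[str, str]], model: Callable[[str], str], document: str) -> List[str]:
    """Each persona works on the previous persona's response; the first one gets the document."""
    material = document
    responses = []
    for role, task in steps:
        material = model(persona_prompt(role, task, material))
        responses.append(material)
    return responses


STEPS = [
    ("Business Analyst", "Give the Functional and Non-Functional requirements of the project."),
    ("Cloud Architect", "Give the architecture considerations for these requirements."),
]


def stub_model(prompt: str) -> str:
    """Stand-in for a real LLM call so the sketch runs offline."""
    return f"(response to: {prompt.splitlines()[0]})"


for response in run_personas(STEPS, stub_model, "Cloud migration project document ..."):
    print(response)
```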